Top Tools for Hybrid Cloud Load Balancing

2026-04-16

Hybrid cloud load balancing ensures smooth traffic distribution across on-premises, private, and public cloud environments. This approach helps businesses maintain application performance, manage traffic surges, and ensure reliability during cloud migrations or system failures. Here’s a quick look at the top tools and their strengths:

- F5 BIG-IP: Trusted in enterprise environments with advanced Layer 4–7 services, Kubernetes integration, and robust traffic management.

- NGINX Plus: Ideal for microservices and Kubernetes, offering real-time configuration updates and advanced Layer 7 features.

- HAProxy Enterprise: Known for high performance and flexibility, supporting multiple algorithms and strong Kubernetes compatibility.

- AWS Elastic Load Balancing (ELB): A managed service tightly integrated with AWS, offering auto-scaling and support for hybrid setups.

- Google Cloud Load Balancer (GCLB): A global solution with anycast IPs, hybrid connectivity, and AI/ML routing capabilities.

- Azure Application Gateway: Tailored for Azure workloads, featuring Layer 7 routing, WAF integration, and autoscaling.

- Citrix ADC: Flexible deployment options with proximity-based routing and multi-cloud support.

- Traefik: Lightweight and dynamic, designed for containerised environments with real-time updates.

- Kemp LoadMaster: Cost-effective with advanced traffic management and centralised control.

- Loadbalancer.org: Cloud-agnostic with strong multi-cloud consistency and comprehensive Layer 4–7 features.

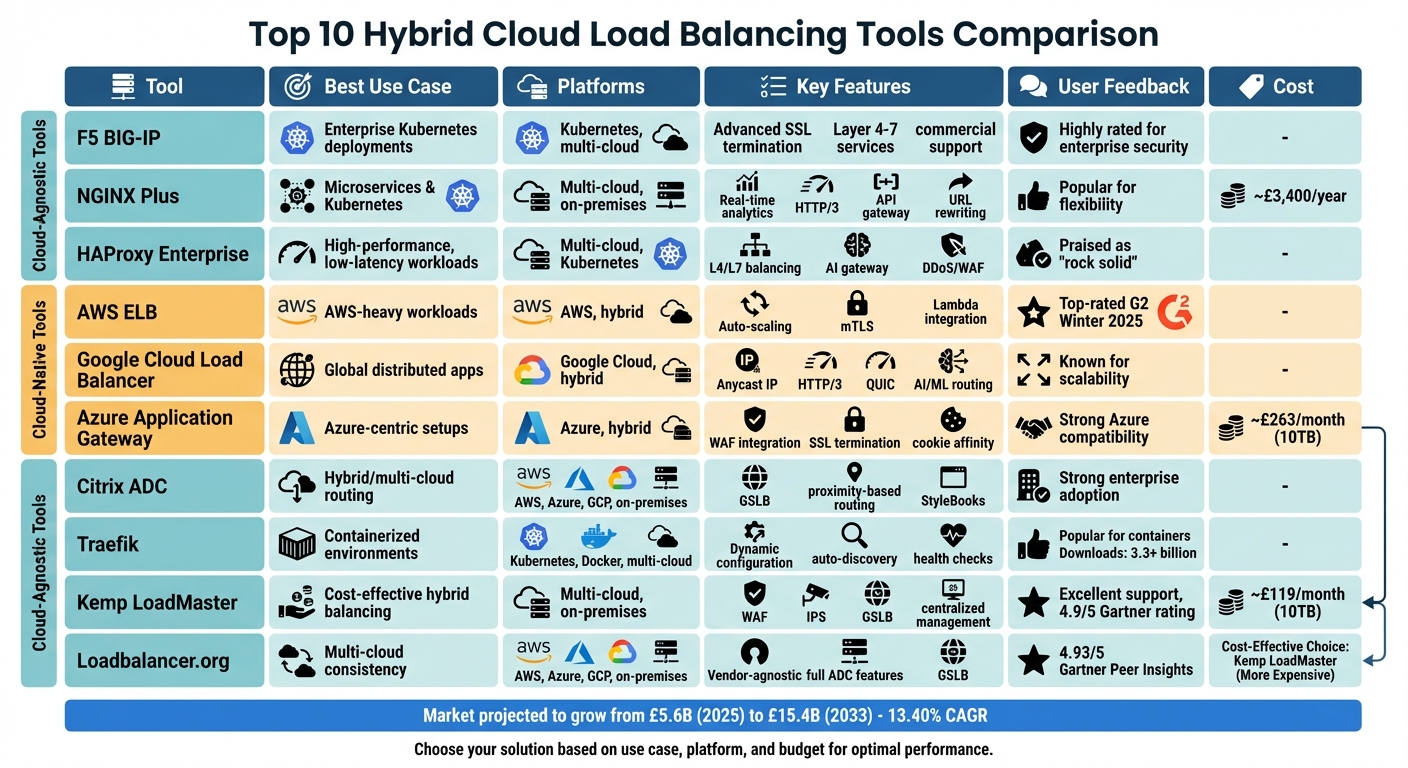

Quick Comparison

| Tool | Best Use Case | Platforms Supported | Key Features | User Feedback |
|---|---|---|---|---|
| F5 BIG-IP | Enterprise Kubernetes deployments | Kubernetes, multi-cloud | Advanced security, SSL termination | Highly rated for enterprise needs |
| NGINX Plus | Microservices & Kubernetes | Multi-cloud, on-premises | Real-time updates, HTTP/3, API gateway | Popular for flexibility |
| HAProxy | High-performance workloads | Multi-cloud, Kubernetes | Advanced routing, low latency | Praised for reliability |
| AWS ELB | AWS-heavy workloads | AWS, hybrid | Auto-scaling, Lambda integration | Strong AWS integration |
| Google GCLB | Global distributed apps | Google Cloud, hybrid | Anycast IP, AI/ML routing | Known for scalability |
| Azure Gateway | Azure-centric setups | Azure, hybrid | WAF, SSL termination, cookie affinity | Strong Azure compatibility |
| Citrix ADC | Hybrid/multi-cloud routing | Multi-cloud, on-premises | GSLB, proximity-based routing | Enterprise adoption |
| Traefik | Containerised environments | Kubernetes, Docker | Dynamic updates, lightweight | Popular for containers |
| Kemp LoadMaster | Cost-effective hybrid balancing | Multi-cloud, on-premises | GSLB, centralised management | Praised for affordability |
| Loadbalancer.org | Multi-cloud consistency | Multi-cloud, on-premises | Vendor-agnostic, advanced Layer 4–7 features | Highly rated for simplicity |

Choose based on your infrastructure, traffic needs, and cloud strategy. Whether you prioritise cost, advanced features, or integration with a specific platform, these tools cater to diverse hybrid cloud architectures.

Hybrid Cloud Load Balancing Tools Comparison: Features, Platforms & Best Use Cases

1. F5 BIG-IP

F5 BIG-IP has been a trusted solution for enterprise application delivery for over 25 years, offering robust support for hybrid and multi-cloud environments. Its reverse proxy architecture ensures consistent Layer 4–7 services across on-premises data centres and cloud platforms like AWS, Azure, and Google Cloud. This approach eliminates the need to overhaul policies when shifting workloads between environments.

Scalability across hybrid and multi-cloud environments

BIG-IP delivers scalability through two key components: BIG-IP DNS and BIG-IP Local Traffic Manager. BIG-IP DNS handles global server load balancing by distributing requests based on service conditions, performance, and geographical location. Meanwhile, BIG-IP Local Traffic Manager optimises traffic within local environments.
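A DNS-based GSLB decision can be sketched in a few lines of Python. This is a minimal illustration, assuming a simple policy: drop unhealthy sites, prefer sites in the client's region, and break ties on average response time. The `Site` fields, region labels, and ordering are hypothetical and do not reflect how BIG-IP DNS is actually configured.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Site:
    name: str
    region: str             # hypothetical region label, e.g. "eu-west"
    healthy: bool           # result of the latest health probe
    avg_response_ms: float  # hypothetical performance metric


def pick_site(sites: list, client_region: str) -> Optional[Site]:
    """Return the best site for a client, or None if no site is healthy."""
    healthy = [s for s in sites if s.healthy]
    if not healthy:
        return None
    # Prefer sites in the client's own region; otherwise consider every healthy site.
    local = [s for s in healthy if s.region == client_region]
    candidates = local or healthy
    # Among the candidates, answer with the fastest-responding site.
    return min(candidates, key=lambda s: s.avg_response_ms)


sites = [
    Site("on-prem-london", "eu-west", True, 18.0),
    Site("aws-eu-west-1", "eu-west", True, 12.0),
    Site("azure-eastus", "us-east", True, 9.0),
]
print(pick_site(sites, "eu-west").name)   # aws-eu-west-1
print(pick_site(sites, "ap-south").name)  # azure-eastus (no local site)
```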

For container-based workloads, the platform integrates seamlessly with Kubernetes and OpenShift using BIG-IP Container Ingress Services. This feature dynamically creates protocol and application services to manage traffic for microservices. Additionally, the F5 Automation Toolchain uses declarative interfaces to simplify deployment and configuration, reducing human errors in CI/CD workflows.

Ease of deployment and management

F5 BIG-IP consolidates multiple services - load balancing, DNS, WAF, and access control - into a single platform, helping reduce operational complexity. It supports automation tools like Terraform, Ansible, and cloud-native deployment managers, enabling smoother provisioning processes.

For custom traffic management needs, F5 iRules provide programmability to handle unique challenges, such as mitigating zero-day attacks or replicating specific traffic requests. The platform’s ease of use has earned it accolades, including the 2026 TrustRadius Buyer's Choice Award and the 2023 TrustRadius awards for Best Relationship, Best Feature Set, and Best Value for Price.

Integration with cloud platforms (AWS, Azure, GCP, etc.)

F5 BIG-IP integrates directly with AWS Gateway Load Balancer and Azure Gateway Load Balancer, streamlining the addition of security and application services into cloud traffic flows. This integration also allows horizontal scaling of BIG-IP Virtual Editions based on demand.

For Google Cloud users, F5 offers NGINXaaS, which enhances application delivery and visibility at scale. Specific deployment configurations, such as using 'Network' mode for AWS and Azure Gateway Load Balancers, ensure proper packet handling and compatibility with TMOS v16.1+ and TCP port 24500.

Traffic routing and health monitoring capabilities

F5 BIG-IP employs active health checks and protocol awareness to distribute traffic intelligently, based on the real-time state of applications. By supporting multiple protocols (TCP, UDP, HTTP, HTTPS, ICMP) and offering policy templates, the platform simplifies load balancing processes.

Additionally, live telemetry streaming provides actionable insights for faster problem resolution, while SSL/TLS decryption ensures deep visibility into encrypted traffic. These capabilities make F5 BIG-IP a robust choice for managing hybrid load balancing effectively.


2. NGINX Plus

After exploring F5 BIG-IP, let’s dive into NGINX Plus, which stands out for its streamlined approach to hybrid cloud environments.

NGINX Plus provides a unified application delivery platform that works seamlessly across on-premises setups, private clouds, and public cloud providers like AWS, Azure, and Google Cloud Platform. This consistency eliminates the complexity of juggling multiple tools, making hybrid deployments far smoother.

Scalability across hybrid and multi-cloud environments

NGINX Plus supports Global Server Load Balancing (GSLB), enabling traffic distribution across multiple geographic regions. This ensures high availability while safeguarding against regional outages. It also enhances built-in cloud load balancers - such as Azure Standard Load Balancer and AWS Network Load Balancer - by adding advanced Layer 7 features like content-based routing and session persistence, complementing the Layer 4 capabilities provided by these cloud platforms.

Additionally, the NGINX Gateway Fabric simplifies service connectivity in Kubernetes environments. It supports active-active high availability and can handle millions of data flows.

Ease of deployment and management

With its REST API for dynamic configuration, NGINX Plus allows you to modify upstream server groups in real-time, eliminating the need for process reloads or service restarts. This is particularly useful in dynamic cloud environments where server instances frequently change.
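As a rough sketch of what such a runtime change looks like, the Python snippet below builds (but does not send) an HTTP request that adds a server to an upstream group through the NGINX Plus API. The API base URL, port 8080, API version 9, and the upstream name `backend` are assumptions for illustration. The upstream group also needs a shared memory `zone` for runtime changes to be accepted.

```python
import json
import urllib.request


def add_upstream_server_request(api_base: str, upstream: str, server: str,
                                weight: int = 1) -> urllib.request.Request:
    """Build a POST request that adds `server` to the named upstream group.

    `api_base` is assumed to look like "http://127.0.0.1:8080/api/9";
    adjust the host, port and API version to your own NGINX Plus setup.
    """
    url = f"{api_base}/http/upstreams/{upstream}/servers"
    body = json.dumps({"server": server, "weight": weight}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


req = add_upstream_server_request("http://127.0.0.1:8080/api/9", "backend", "10.0.0.12:80")
print(req.get_method(), req.full_url)
# POST http://127.0.0.1:8080/api/9/http/upstreams/backend/servers
# To apply the change against a live instance: urllib.request.urlopen(req)
```

Because the request only touches the API, worker processes keep serving traffic while the server list changes.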

For added convenience, NGINXaaS on Azure and Google Cloud enables direct deployment via their marketplaces. The NGINX One Console provides centralised SaaS-based management, offering observability, security, and control across all NGINX instances, no matter where they’re deployed. These features earned NGINX the 2024 TrustRadius Top Rated Award for its management capabilities. Such tools are crucial for efficient hybrid cloud operations.

Integration with cloud platforms (AWS, Azure, GCP, etc.)

NGINX Plus integrates seamlessly with Azure Traffic Manager, offering DNS-based global load balancing across regions. For Azure Kubernetes Service, the NGINX Load Balancer for Kubernetes (NLK) simplifies multi-cluster load balancing and failover, dynamically updating configurations through its NLK controller.

On AWS, NGINX Plus works alongside the Network Load Balancer to handle millions of requests per second, enhancing it with Layer 7 capabilities that go beyond the native L4 tools. Deployment automation is also made easier with Packer and Terraform scripts, ensuring consistent configurations across different cloud platforms. These integrations strengthen its traffic management capabilities, which we’ll explore further.

Traffic routing and health monitoring capabilities

Unlike its open-source counterpart, NGINX Plus performs active, application-aware health checks. These checks go beyond basic connection tests to ensure that upstream servers are returning valid application-layer content.
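The logic of an application-aware check can be sketched as a function that inspects a probe's response rather than just confirming that a TCP connection opened. This is a simplified illustration, not NGINX Plus code. It mirrors the idea of combining status, header, and body conditions, and the expected `Content-Type` and the `"status": "ok"` body marker are hypothetical values for an assumed health endpoint.

```python
import re
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class ProbeResult:
    status: int
    headers: dict = field(default_factory=dict)
    body: str = ""


def passes_health_check(result: ProbeResult,
                        ok_statuses: range = range(200, 400),
                        content_type: Optional[str] = "application/json",
                        body_pattern: Optional[str] = r'"status"\s*:\s*"ok"') -> bool:
    """Return True only if the probe response looks like a working application."""
    # 1. Status check: 2xx or 3xx by default.
    if result.status not in ok_statuses:
        return False
    # 2. Header check, with header names compared case-insensitively.
    if content_type is not None:
        headers = {k.lower(): v for k, v in result.headers.items()}
        if not headers.get("content-type", "").lower().startswith(content_type.lower()):
            return False
    # 3. Body check: a "200 OK" maintenance page should still fail.
    if body_pattern is not None and re.search(body_pattern, result.body) is None:
        return False
    return True


print(passes_health_check(ProbeResult(200, {"Content-Type": "application/json"}, '{"status": "ok"}')))  # True
print(passes_health_check(ProbeResult(200, {"Content-Type": "text/html"}, "<h1>Maintenance</h1>")))    # False
```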

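The platform also includes the least_time method, which routes each request to the server with the lowest average response time and the fewest active connections. Upstream state is held in a shared memory zone: according to NGINX's sizing guidance, a 256-KB zone can hold data for about 128 upstream servers (each defined as an IP address and port pair) with route-based session persistence and a single health check enabled. Cookie-based session persistence is also available.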
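To show why combining latency and load matters, here is a sketch of a least-time style choice. NGINX Plus's internal formula is not reproduced here; the score below (average response time multiplied by active connections plus one, divided by weight) is an assumption chosen to illustrate the idea, and the addresses and figures are made up.

```python
from dataclasses import dataclass


@dataclass
class Upstream:
    address: str
    avg_response_ms: float   # moving average of response time
    active_connections: int
    weight: int = 1


def least_time_pick(servers: list) -> Upstream:
    """Pick the server with the lowest combined latency/load score.

    Assumed score: avg_response_ms * (active_connections + 1) / weight.
    This approximates the idea behind least_time; it is not NGINX Plus's formula.
    """
    return min(
        servers,
        key=lambda s: s.avg_response_ms * (s.active_connections + 1) / s.weight,
    )


pool = [
    Upstream("10.0.0.1:80", avg_response_ms=40.0, active_connections=2),  # score 120
    Upstream("10.0.0.2:80", avg_response_ms=25.0, active_connections=6),  # score 175
    Upstream("10.0.0.3:80", avg_response_ms=30.0, active_connections=1),  # score 60
]
print(least_time_pick(pool).address)  # 10.0.0.3:80
```

Note that the server with the fastest average (10.0.0.2) loses because it is already busy. In NGINX Plus itself the method is enabled with the `least_time` directive inside an `upstream` block.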

3. HAProxy Enterprise

HAProxy Enterprise stands out as a tool designed for managing hybrid cloud load balancing with a focus on scalability and simplicity. It offers deployment flexibility across various environments, including bare metal, virtual machines, Kubernetes, and major public clouds like AWS, Azure, and GCP. This approach eliminates vendor lock-in and ensures consistent performance, even under heavy traffic loads, with the ability to handle millions of requests per second and sub-millisecond latency.

Scalability across hybrid and multi-cloud environments

The HAProxy Fusion Control Plane simplifies management for multi-cluster and multi-cloud setups by providing a centralised interface. Kalaiyarasan Manoharan, Senior Staff Network Engineer at PayPal, highlighted its efficiency:

We have multiple HAProxy Enterprise clusters that are distributed across each of the cloud providers... All of those operations and observability are taken care of by HAProxy Fusion.

Unlike traditional hardware appliances that require complex network adjustments, HAProxy Enterprise scales horizontally by adding more instances. Its Global Server Load Balancing (GSLB) feature acts as an authoritative DNS server, distributing traffic across global regions and data centres. The platform also enables automated service discovery, which dynamically updates backend configurations in real-time as Kubernetes pods scale, ensuring seamless operations.

Ease of deployment and management

HAProxy Enterprise is easy to deploy, available as a pre-packaged Amazon Machine Image (AMI) for AWS and through the Azure Marketplace. The platform's Data Plane and Runtime APIs allow for configuration updates and ACL changes without restarting processes, ensuring zero downtime.

Austin Ellsworth, Infrastructure Manager at Weller Truck Parts, shared how HAProxy Fusion simplified their operations:

When you can log into HAProxy Fusion, manage all your clusters from a single location, and have all your log data aggregated, it simplifies your life.

In Winter 2026, the platform was awarded 80 G2 badges, including "Best Results" and "Best Usability" in Enterprise Load Balancing. However, some users mention that its custom configuration language can be challenging for those accustomed to GUI-based tools.

Integration with cloud platforms (AWS, Azure, GCP, etc.)

HAProxy Enterprise integrates seamlessly with leading cloud platforms and modern DevOps workflows. It supports tools like Terraform, Ansible, and Consul via its RESTful Data Plane API. On AWS, for example, nodes can be organised into autoscaling groups through HAProxy Fusion, allowing capacity to adjust automatically based on traffic needs.

As a Kubernetes Ingress Controller, the platform provides advanced Layer 7 routing across multiple clouds, ensuring consistent WAF rules and routing policies in hybrid setups.

Traffic routing and health monitoring capabilities

With over 13 static and dynamic load-balancing algorithms, HAProxy Enterprise supports a wide range of protocols, including HTTP/3, QUIC, gRPC, and MQTT. It routes traffic based on various factors like URLs, HTTP headers, cookies, and client IP addresses.

The platform's health monitoring features include automated application-aware checks and secure HTTPS validation, ensuring traffic is directed only to fully operational servers. The Dynamic Update Module allows for runtime updates to ACLs and routing maps without dropping connections. For database management, the "leastconn" algorithm is particularly useful for evenly distributing queries across read replicas.
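For the read-replica case, the idea behind HAProxy's `balance leastconn` can be simulated in Python. The sketch is illustrative only: the replica names are hypothetical, load is divided by weight as a simplification, and ties go to the first replica in the list, whereas HAProxy round-robins among equally loaded servers.

```python
from dataclasses import dataclass


@dataclass
class Replica:
    name: str
    active_connections: int = 0
    weight: int = 1


def leastconn_pick(replicas: list) -> Replica:
    """Choose the replica with the fewest active connections per unit of weight."""
    # min() returns the first of several equally loaded replicas.
    return min(replicas, key=lambda r: r.active_connections / r.weight)


def run_query(replicas: list) -> str:
    """Send one query; in this toy model its connection stays open afterwards."""
    target = leastconn_pick(replicas)
    target.active_connections += 1
    return target.name


replicas = [Replica("db-r1", 5), Replica("db-r2", 2), Replica("db-r3", 2)]
print([run_query(replicas) for _ in range(4)])
# ['db-r2', 'db-r3', 'db-r2', 'db-r3']
```

Long-lived database connections are exactly where this helps: round robin would keep sending new queries to `db-r1` even though it already holds the most open connections.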

With active-active failover, HAProxy Enterprise achieves 99.999% uptime. These capabilities have earned it the top spot in G2's Winter 2026 Enterprise and Overall Grid for Load Balancing.

4. AWS Elastic Load Balancing

AWS Elastic Load Balancing (ELB) is a managed service designed to automatically distribute incoming traffic across various targets. It supports three types of load balancers: Application Load Balancer (ALB) for Layer 7 traffic, Network Load Balancer (NLB) for Layer 4 traffic, and Gateway Load Balancer (GWLB) for Layer 3 virtual appliances. This service bridges AWS cloud capabilities with on-premises resources, making it a flexible option for hybrid environments. For instance, Second Spectrum used ELB to cut its Kubernetes hosting costs by 90%.

Scalability across hybrid and multi-cloud environments

ELB is well-suited for hybrid cloud setups, allowing users to register non-AWS resources via IP addresses. This means a single load balancer can manage traffic across both AWS and on-premises infrastructure. For example, Network Load Balancers can connect to on-premises systems using AWS Direct Connect, VPN, or VPC peering. To extend this further, AWS Route 53 can distribute traffic across multiple ELBs in different regions based on latency or weighted routing, while AWS Global Accelerator ensures efficient global traffic distribution.
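Registering on-premises servers means creating IP-type targets. The helper below builds the keyword arguments for the `register_targets` call of boto3's `elbv2` client. It checks that each address falls in a private range AWS accepts for IP targets (RFC 1918 and RFC 6598) and sets `AvailabilityZone` to `"all"`, the value AWS expects for IP targets outside the load balancer's VPC (confirm against the current `RegisterTargets` documentation for your load balancer type). The target group ARN and addresses are placeholders, and the target group is assumed to use the `ip` target type.

```python
import ipaddress

# Private ranges usable as IP targets outside the VPC (RFC 1918 and RFC 6598).
ALLOWED_RANGES = [ipaddress.ip_network(n) for n in
                  ("10.0.0.0/8", "172.16.0.0/12", "192.168.0.0/16", "100.64.0.0/10")]


def build_register_targets_args(target_group_arn: str, ips: list, port: int) -> dict:
    """Build keyword arguments for elbv2 register_targets with on-premises IP targets."""
    targets = []
    for ip in ips:
        addr = ipaddress.ip_address(ip)
        if not any(addr in net for net in ALLOWED_RANGES):
            raise ValueError(f"{ip} is not in a private range usable as an IP target")
        # "all" marks an IP target that lives outside the load balancer's VPC.
        targets.append({"Id": ip, "Port": port, "AvailabilityZone": "all"})
    return {"TargetGroupArn": target_group_arn, "Targets": targets}


args = build_register_targets_args(
    "arn:aws:elasticloadbalancing:eu-west-2:123456789012:targetgroup/onprem-tg/0123456789abcdef",
    ["10.20.0.5", "192.168.1.10"],
    443,
)
print(args["Targets"])
# With credentials configured: boto3.client("elbv2").register_targets(**args)
```

The on-premises addresses must also be reachable from the VPC, for example over Direct Connect or a VPN, or health checks will mark them unhealthy.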

These features make ELB a scalable and relatively low-maintenance solution for traffic management. However, deploying ELBs in multi-cloud environments still requires careful adjustment of routing rules, security settings, and automation processes.

Ease of deployment and management

ELB simplifies operations by handling scaling, availability, and security automatically. It can be managed through the AWS Management Console, the AWS CLI, the SDKs, or the low-level Query API, and deletion protection guards against accidentally removing a load balancer. To give Application and Classic Load Balancers room to scale, AWS recommends that each subnet use a CIDR block of at least /27 and have at least eight free IP addresses.
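The subnet guidance can be checked with Python's standard `ipaddress` module. This sketch assumes AWS's usual practice of reserving five addresses in every subnet (the first four and the last); `addresses_in_use` is a figure you would supply from your own inventory. A /27 holds 32 addresses, so an empty /27 leaves 27 usable ones.

```python
import ipaddress

AWS_RESERVED_PER_SUBNET = 5  # AWS reserves the first four addresses and the last one


def subnet_ok_for_elb(cidr: str, addresses_in_use: int) -> bool:
    """Check the /27-or-larger and eight-free-addresses guidance for one IPv4 subnet."""
    net = ipaddress.ip_network(cidr)
    free = net.num_addresses - AWS_RESERVED_PER_SUBNET - addresses_in_use
    return net.prefixlen <= 27 and free >= 8


print(subnet_ok_for_elb("10.0.0.0/27", 0))   # True: 32 - 5 = 27 free
print(subnet_ok_for_elb("10.0.0.0/27", 20))  # False: only 7 free
print(subnet_ok_for_elb("10.0.0.0/28", 0))   # False: smaller than /27
```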

Integration with cloud platforms (AWS, Azure, GCP, etc.)

ELB integrates seamlessly with AWS services such as EC2, Auto Scaling, ECS, EKS, Lambda, and WAF. For Kubernetes workloads, the AWS Load Balancer Controller supports direct-to-pod load balancing for EKS and Fargate clusters. Additionally, integrating with AWS Certificate Manager simplifies SSL/TLS certificate management and termination.

That said, ELB is specifically designed for AWS, and its routing rules and security settings don't easily carry over to other cloud platforms. This is an important consideration for organisations operating in multi-cloud environments.

Traffic routing and health monitoring capabilities

Application Load Balancers provide advanced traffic routing options, including URL paths, hostnames, HTTP headers, query parameters, and source IP addresses. On the other hand, Network Load Balancers use a flow hash algorithm, which incorporates protocol, IP addresses, ports, and TCP sequence numbers to efficiently process millions of requests with minimal latency.
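The flow-hash idea can be illustrated by hashing the fields that identify a TCP connection and mapping the result onto the target list. AWS does not publish its hash function, so SHA-256 modulo the number of targets is a stand-in chosen for this sketch, and hashing the connection's initial sequence number is an assumption that keeps every packet of one connection on the same target while separate connections from the same client can spread out.

```python
import hashlib


def flow_hash_target(protocol: str, src_ip: str, src_port: int,
                     dst_ip: str, dst_port: int, initial_seq: int,
                     targets: list) -> str:
    """Map one TCP connection to a target by hashing its identifying fields."""
    key = f"{protocol}|{src_ip}|{src_port}|{dst_ip}|{dst_port}|{initial_seq}".encode()
    digest = hashlib.sha256(key).digest()
    # Use the first 8 bytes of the digest as an integer, then reduce modulo N.
    index = int.from_bytes(digest[:8], "big") % len(targets)
    return targets[index]


targets = ["10.0.1.10", "10.0.2.10", "10.0.3.10"]
print(flow_hash_target("TCP", "198.51.100.7", 51514, "10.0.0.100", 443, 1234567, targets))
```

A plain modulo remaps most flows whenever the target list changes, so a production load balancer also has to remember established flows; the sketch covers only the selection step.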

ELB also conducts continuous health checks using protocols like HTTP, HTTPS, TCP, and gRPC. Features such as zonal shifts allow traffic to be redirected from impaired Availability Zones, while connection multiplexing ensures consistent service reliability.

Additionally, the default DNS Time-to-Live (TTL) setting of 60 seconds enables quick IP address remapping to adapt to changing traffic patterns. Cross-zone load balancing further ensures even traffic distribution across all Availability Zones, promoting system stability and performance.
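Cross-zone balancing is easiest to see with a little arithmetic. The sketch assumes two zones with 2 and 8 registered targets and that client traffic is split evenly between the two zonal load balancer nodes; the zone names are placeholders.

```python
def per_target_share(targets_per_zone: dict, cross_zone: bool) -> dict:
    """Fraction of all traffic that each target in a zone receives.

    Assumes clients are spread evenly across the load balancer's zonal nodes.
    """
    total_targets = sum(targets_per_zone.values())
    zones = len(targets_per_zone)
    shares = {}
    for zone, count in targets_per_zone.items():
        if cross_zone:
            # Every node can use every target, so all targets share equally.
            shares[zone] = 1 / total_targets
        else:
            # Each node keeps its 1/zones slice inside its own zone.
            shares[zone] = (1 / zones) / count
    return shares


print(per_target_share({"zone-a": 2, "zone-b": 8}, cross_zone=False))
# {'zone-a': 0.25, 'zone-b': 0.0625}
print(per_target_share({"zone-a": 2, "zone-b": 8}, cross_zone=True))
# {'zone-a': 0.1, 'zone-b': 0.1}
```

Without cross-zone balancing, each target in the smaller zone carries a quarter of all traffic, four times the share of a target in the larger zone; with it enabled, all ten targets carry 10% each.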

5. Google Cloud Load Balancer

Google Cloud Load Balancer (GCLB), powered by Maglev, operates from more than 202 global points of presence and handles traffic effortlessly, whether it's for a handful of users or millions. Designed to work in hybrid setups, it integrates smoothly with on-premises and multi-cloud environments. By using a single anycast IP, GCLB manages backend instances worldwide, simplifying DNS processes and directing traffic intelligently to the nearest healthy backend across regions or clouds.

Scalability across hybrid and multi-cloud environments

GCLB supports hybrid cloud setups through Hybrid Connectivity Network Endpoint Groups (NEGs). These allow on-premises data centres or other public cloud platforms to act as backends, provided they are accessible via Cloud VPN or Cloud Interconnect. This means you can seamlessly incorporate resources from AWS, Azure, or your own data centre alongside Google Cloud services. To minimise latency when setting up hybrid NEGs, it's best to select a Google Cloud zone closest to your external data centre.

Several GCLB load balancer types are built on the open-source Envoy proxy, enabling advanced traffic management that fits well within the Envoy ecosystem. With weight-based traffic splitting, you can gradually shift traffic between on-premises environments and Google Cloud without altering the frontend configuration, which keeps each migration step small and easy to reverse.
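Weight-based splitting can be sketched in Python as a weighted random choice. The backend names and the 90/10 split are hypothetical. In GCLB the weights are set in the load balancer's routing configuration rather than in application code, so this only illustrates the resulting distribution.

```python
import random
from collections import Counter
from typing import Optional


def make_splitter(weights: dict, seed: Optional[int] = None):
    """Return a function that picks a backend with probability proportional to its weight."""
    rng = random.Random(seed)
    backends = list(weights)
    values = [weights[b] for b in backends]

    def choose() -> str:
        return rng.choices(backends, weights=values, k=1)[0]

    return choose


# Early migration step: 90% of requests stay on-premises, 10% go to Google Cloud.
choose = make_splitter({"onprem-hybrid-neg": 90, "gce-backend": 10}, seed=42)
print(Counter(choose() for _ in range(10_000)))
```

Raising the Google Cloud weight in stages (for example 10, 25, 50, then 100) gives a migration path that can be rolled back by changing weights alone.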

Ease of deployment and management

GCLB isn't just about scalability - it simplifies deployment and ensures consistent security across hybrid setups. As a fully managed service, it takes care of scaling, availability, and security automatically, reducing operational workload. Security measures like Google Cloud Armor and Cloud CDN policies apply uniformly across all hybrid backends, maintaining a consistent security framework wherever your workloads are located. Additionally, enabling global access on the forwarding rule for regional internal load balancers allows clients from any Google Cloud region to connect seamlessly.

Traffic routing and health monitoring capabilities

GCLB optimises traffic routing by considering health, proximity, and capacity, ensuring low-latency paths in hybrid environments. It supports modern protocols like HTTP/3 and QUIC, which can speed up connection times for returning clients. Some advanced features include content-based load balancing, request mirroring, and model-aware routing tailored for AI/ML workloads.

Health monitoring is precise, reflecting real traffic conditions to ensure only ready backends receive connections. For hybrid configurations, you'll need to set up firewall rules to allow incoming traffic from Google's health-check probe ranges (35.191.0.0/16 and 130.211.0.0/22). Depending on your load balancer type, the system supports centralised health checks from Google's infrastructure or distributed Envoy health checks originating from proxy-only subnets.
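When writing or auditing firewall rules on the on-premises side, it helps to check whether a source address belongs to Google's probe ranges. This sketch uses only the two ranges quoted above and Python's standard `ipaddress` module; the sample addresses are arbitrary.

```python
import ipaddress

# Google Cloud health-check probe source ranges, as listed above.
HEALTH_CHECK_RANGES = [
    ipaddress.ip_network("35.191.0.0/16"),
    ipaddress.ip_network("130.211.0.0/22"),
]


def is_google_health_probe(source_ip: str) -> bool:
    """True if the address falls inside one of the probe ranges."""
    addr = ipaddress.ip_address(source_ip)
    return any(addr in net for net in HEALTH_CHECK_RANGES)


for ip in ("35.191.12.7", "130.211.3.200", "130.211.4.1", "10.0.0.1"):
    print(ip, is_google_health_probe(ip))
```

Note that 130.211.0.0/22 ends at 130.211.3.255, so 130.211.4.1 is outside the allowed range.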

6. Azure Application Gateway

Azure Application Gateway is a Layer 7 load balancer developed by Microsoft, tailored for Azure workloads and hybrid setups that include on-premises connectivity. The Standard_v2 SKU offers autoscaling based on real-time traffic, eliminating the need for manual scaling efforts. This version also includes zone redundancy, which distributes instances across multiple Availability Zones to enhance fault tolerance without requiring separate gateways for each zone.

Scalability across hybrid and multi-cloud environments

For hybrid deployments, Application Gateway enables back-end pools to incorporate on-premises resources or endpoints hosted in other clouds, provided they are connected via VPN or dedicated links. A standout feature of the v2 SKU is its support for static Virtual IPs (VIP), which ensures a consistent entry point - critical for hybrid connectivity and firewall configurations. Microsoft guarantees a 99.95% uptime SLA for multi-instance deployments, and the platform can manage and route traffic for over 100 websites on a single gateway using path- and header-based routing.

However, Application Gateway is inherently a cloud-native Azure service. Transferring its routing rules, security configurations, or automation scripts to platforms like AWS or GCP can be challenging, as these setups are specific to Azure and not easily portable. Organisations seeking uniformity across multiple cloud providers might need to consider additional cloud-neutral tools to bridge the gap.

The seamless integration of these scalable features with Azure's ecosystem makes it an effective choice for streamlined deployments.

Ease of deployment and management

Beyond scalability, Application Gateway simplifies operational management. It works seamlessly with Azure services such as Azure Kubernetes Service (AKS), Virtual Machine Scale Sets (VMSS), and Azure App Service. SSL certificate management is centralised via Azure Key Vault, enabling automatic renewals and reducing administrative overhead. Additionally, connection draining ensures that ongoing requests are completed before backend instances are taken offline for updates or maintenance, minimising the risk of disruptions or data loss.

"Microsoft Azure enables us to quickly respond to changing traffic on spaactor.com and withstand even large peak loads. Above all, our internet search engine for spoken content is easily scalable and available through the Azure infrastructure worldwide." - Christian Schrumpf, Founder and CEO, Spaactor GmbH

Traffic routing and health monitoring capabilities

Application Gateway combines advanced routing with proactive health monitoring. It directs traffic based on HTTP request attributes like URL paths (e.g., /images versus /video) and host headers. It also supports session affinity through gateway-managed cookies, which is particularly useful for applications that store session data locally. Features such as HTTP header and URL rewriting allow organisations to enforce security measures like HSTS. For monitoring, it integrates with Azure Monitor and Azure Security Center, offering a unified dashboard for real-time backend health tracking and alerts. In global scenarios, pairing Application Gateway with Azure Traffic Manager enables DNS-based global routing, while Azure Front Door provides web acceleration and global load balancing.
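A path map like the /images and /video example can be sketched as a prefix lookup with a default pool. The pool names are hypothetical, and the "longest matching pattern wins" rule is a simplification chosen for this sketch; Azure's documentation describes the exact order in which Application Gateway evaluates path rules.

```python
# Hypothetical path map: path pattern -> backend pool name.
PATH_MAP = {
    "/images/*": "image-pool",
    "/video/*": "video-pool",
}
DEFAULT_POOL = "default-pool"


def route(path: str, path_map: dict = PATH_MAP, default: str = DEFAULT_POOL) -> str:
    """Return the backend pool for a request path; the longest matching pattern wins."""
    matches = [(pattern, pool) for pattern, pool in path_map.items()
               if path.startswith(pattern.rstrip("*"))]
    if not matches:
        return default
    return max(matches, key=lambda m: len(m[0]))[1]


print(route("/images/logo.png"))  # image-pool
print(route("/video/intro.mp4"))  # video-pool
print(route("/about"))            # default-pool
```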

7. Citrix ADC (formerly NetScaler)

Citrix ADC takes a distinct approach to hybrid load balancing by offering a unified solution that works seamlessly across various environments. Whether it's physical hardware (MPX/SDX), virtual machines (VPX), bare metal (BLX), or containers (CPX), the platform ensures consistent deployment and operation. This flexibility allows organisations to maintain uniform processes, whether they're working on-premises, in private clouds, or across public cloud providers like AWS, Azure, and GCP. A single code base simplifies management across these diverse setups.

Scalability across hybrid and multi-cloud environments

Citrix ADC employs GSLB (Global Server Load Balancing) to distribute workloads between on-premises infrastructure and public cloud regions. Its Metrics Exchange Protocol (MEP) enables real-time performance data sharing, supporting dynamic and efficient load balancing.

The platform supports both active-active configurations for resource optimisation and active-passive setups for disaster recovery. For organisations exploring cloud migration, the hybrid GLB feature allows a phased approach by routing a small portion of traffic to cloud instances before committing fully. Additionally, Citrix ADC integrates with AWS Outposts, providing low-latency solutions by acting as a local extension of an AWS Region.

Traffic routing and health monitoring capabilities

Citrix ADC offers a variety of traffic routing methods, including:

- Metric-based options: Such as Least Connection, Least Bandwidth, and Least Packets.

- Non-metric strategies: Like round robin and source IP hash.

- Proximity-based methods: Including Round-Trip Time (RTT), which measures the delay between the client DNS and the data centre to determine the best site.

For example, the Least Packets method selects the service that has received the fewest packets.
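The RTT-based proximity method can be sketched as choosing the available site with the lowest measured round-trip time to the client's DNS resolver. Site names, RTT values, and the least-connection fallback for resolvers with no measurements are assumptions of this sketch, not a description of Citrix ADC internals.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class GslbSite:
    name: str
    up: bool
    active_connections: int
    # Measured RTT in milliseconds, keyed by client DNS resolver IP.
    rtt_ms: dict = field(default_factory=dict)


def pick_site_rtt(sites: list, ldns_ip: str) -> Optional[GslbSite]:
    """Pick the up site with the lowest RTT to the client's DNS resolver.

    Falls back to the least-connection site when no RTT has been measured
    for this resolver (the fallback is an assumption of this sketch).
    """
    up = [s for s in sites if s.up]
    if not up:
        return None
    measured = [s for s in up if ldns_ip in s.rtt_ms]
    if measured:
        return min(measured, key=lambda s: s.rtt_ms[ldns_ip])
    return min(up, key=lambda s: s.active_connections)


sites = [
    GslbSite("on-prem-dc", True, 120, {"203.0.113.53": 14.0}),
    GslbSite("aws-us-east-1", True, 40, {"203.0.113.53": 38.0, "198.51.100.53": 9.0}),
    GslbSite("azure-westeurope", False, 10, {"203.0.113.53": 5.0}),
]
print(pick_site_rtt(sites, "203.0.113.53").name)  # on-prem-dc (Azure site is down)
print(pick_site_rtt(sites, "192.0.2.53").name)    # aws-us-east-1 (fallback)
```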

"Citrix ADC GSLB can be deployed in an active-active configuration across multiple sites in a hybrid cloud with AWS set up... to globally steer traffic across a distributed environment." - Arvind Kandula, Principal Product Manager, Citrix

To simplify management, the NetScaler Console (formerly ADM) provides a centralised interface for overseeing instances across different locations. It also features "StyleBooks", which enable template-based, automated configurations. Starting from 15th April 2026, Citrix will require the use of its cloud-based Licensing Activation Service (LAS), retiring traditional file-based licences to further streamline hybrid deployments.

8. Traefik

Traefik stands out as a modern solution for hybrid load balancing, designed to adapt dynamically to changing environments. With features like automatic service discovery and real-time configuration updates, it’s well-suited for hybrid setups. Impressively, it has surpassed 3.3 billion downloads and boasts over 55,000 stars on GitHub. By using API Providers, Traefik detects service changes and updates routing configurations automatically, ensuring seamless operation in dynamic systems.

Scalability across hybrid and multi-cloud environments

Traefik’s ability to scale across various environments is a key strength. Its unified runtime layer standardises traffic management and policy enforcement across Kubernetes clusters, virtual machines, and even bare metal servers. This makes it an effective ingress controller for both modern and older systems.

"The unified runtime layer is no longer optional; it is the foundation that makes everything else possible."

For environments that require auto-scaling, Traefik employs the Power of Two Choices (P2C) algorithm. This method selects two random servers and routes traffic to the one with fewer active connections, helping balance the load efficiently as servers are added or removed. Its multi-layer architecture ensures routing consistency across major cloud platforms like GKE (Google Cloud), ECS (AWS), and VMs (Azure), making it a strong choice for hybrid cloud setups.
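The Power of Two Choices method fits in a few lines of Python. This is a generic illustration of the algorithm as described above, not Traefik's source code; server names and connection counts are made up.

```python
import random
from typing import Optional


def p2c_pick(active: dict, rng: Optional[random.Random] = None) -> str:
    """Power of Two Choices: sample two distinct servers and keep the less loaded one."""
    rng = rng or random.Random()
    servers = list(active)
    if len(servers) == 1:
        return servers[0]
    a, b = rng.sample(servers, 2)
    return a if active[a] <= active[b] else b


# Toy simulation: each routed request keeps its connection open.
rng = random.Random(0)
connections = {"pod-a": 0, "pod-b": 0, "pod-c": 0, "pod-d": 0}
for _ in range(100):
    connections[p2c_pick(connections, rng)] += 1
print(connections)
```

One useful property: a server that is strictly the most loaded is never chosen, because whichever server it is paired with has fewer connections. The method only needs two lookups per request, which is why it copes well as pods come and go.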

Ease of deployment and management

Traefik simplifies deployment with its fully declarative, GitOps-driven model, allowing infrastructure configurations to be managed through version-controlled code in formats like YAML, TOML, or container labels. For teams transitioning from tools like ingress-nginx, Traefik supports existing annotations, reducing the need for significant reconfiguration. Its auto-discovery feature dynamically updates configurations, making management straightforward and efficient.

Integration with cloud platforms (AWS, Azure, GCP, etc.)

Traefik seamlessly integrates with leading cloud platforms, including AWS, Azure, and Google Cloud. The Enterprise version enhances this with advanced security integrations, such as HashiCorp Vault and Azure Key Vault, for robust key management. Additionally, Traefik adapts to the transient nature of cloud-native containers, dynamically updating routes as containers are added or removed.

Traffic routing and health monitoring capabilities

Traefik supports flexible traffic routing at both Layer 4 (TCP/UDP) and Layer 7 (HTTP/gRPC), offering various load balancing strategies. The Weighted Round Robin (WRR) approach is ideal for evenly matched servers with consistent workloads, while the Least-Time strategy directs traffic to the fastest backend, making it suitable for mixed infrastructure setups. For stateful backends, the Highest Random Weight (HRW) strategy ensures consistent server selection, improving cache efficiency.
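Highest Random Weight (rendezvous) hashing can be sketched as scoring every server against a request key, such as a cache key or session ID, and picking the top score. This unweighted version uses SHA-256 as the scoring hash, which is an assumption of the sketch; Traefik's implementation may differ.

```python
import hashlib


def hrw_pick(key: str, servers: list) -> str:
    """Highest Random Weight hashing: score every server for this key, return the top one."""
    def score(server: str) -> int:
        digest = hashlib.sha256(f"{server}|{key}".encode()).digest()
        return int.from_bytes(digest[:8], "big")

    return max(servers, key=score)


servers = ["cache-1", "cache-2", "cache-3"]
before = {k: hrw_pick(k, servers) for k in (f"session-{i}" for i in range(1000))}
after = {k: hrw_pick(k, servers[:2]) for k in before}  # cache-3 removed
moved = [k for k in before if before[k] != after[k]]
# Only keys that previously lived on cache-3 change owner.
print(all(before[k] == "cache-3" for k in moved))  # True
```

Because each key's owner is the highest-scoring server, removing a server only moves the keys it owned, which keeps caches on the remaining servers warm.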

Traefik also supports advanced deployment techniques like canary releases, blue-green deployments, and traffic mirroring, allowing teams to test updates without disrupting user experience. These capabilities make it a versatile tool for managing traffic and maintaining system health in complex environments.

9. Kemp LoadMaster

Kemp LoadMaster is a hybrid cloud load balancing solution that has been deployed in over 100,000 setups worldwide. Known for its competitive pricing, it provides a 10TB throughput deployment with WAF for approximately £119 per month, compared with around £263 per month for a comparable Azure Application Gateway setup. The solution has earned strong user ratings, including 4.9/5 on Gartner Peer Insights, 4.7/5 on G2, and 8.1/10 on TrustRadius. This affordability makes it an appealing option alongside the other tools discussed in this article.

Scalability across hybrid and multi-cloud environments

Kemp LoadMaster is designed to scale efficiently in hybrid cloud setups. It uses Global Server Load Balancing (GSLB) to route traffic based on factors like proximity and server availability. Its "cloud bursting" feature automatically shifts traffic from on-premises servers to public cloud resources when certain KPIs, such as CPU usage or response times, exceed predefined thresholds. The platform also offers a pooled licensing model, enabling businesses to flexibly allocate capacity across virtual instances on any supported cloud or hypervisor. This setup supports both horizontal and vertical scaling without being tied to fixed resources.
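The cloud-bursting rule can be sketched as a threshold check on on-premises KPIs. The thresholds, the 30% burst share, and the KPI names are hypothetical, not LoadMaster defaults.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Kpis:
    cpu_percent: float
    p95_response_ms: float


@dataclass
class BurstPolicy:
    # Hypothetical thresholds; suitable values depend on the application.
    max_cpu_percent: float = 80.0
    max_p95_response_ms: float = 500.0
    burst_share: float = 0.3  # fraction of new requests sent to the cloud while bursting


def cloud_share(on_prem: Kpis, policy: Optional[BurstPolicy] = None) -> float:
    """Return the fraction of new requests to send to public-cloud resources."""
    policy = policy or BurstPolicy()
    overloaded = (on_prem.cpu_percent > policy.max_cpu_percent
                  or on_prem.p95_response_ms > policy.max_p95_response_ms)
    return policy.burst_share if overloaded else 0.0


print(cloud_share(Kpis(cpu_percent=55.0, p95_response_ms=120.0)))  # 0.0
print(cloud_share(Kpis(cpu_percent=92.0, p95_response_ms=130.0)))  # 0.3
```

In practice, a separate and lower threshold for scaling back (hysteresis) helps prevent traffic from flapping between environments.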

Ease of deployment and management

The LoadMaster 360 centralised management console simplifies operations by offering analytics, telemetry, and certificate lifecycle management across hybrid cloud setups. Pre-configured templates for platforms like Exchange, Dell EMC, and ADFS streamline deployment processes. Additionally, REST API and PowerShell support make it easy to integrate with DevOps workflows. A verified user, Michael B., shared his experience:

Deployment and initial setup is straight forward. The product is really amazing and make IT's life easy.

Integration with cloud platforms (AWS, Azure, GCP, etc.)

Kemp LoadMaster integrates seamlessly with major cloud platforms, including AWS (with GovCloud), Microsoft Azure (with Government), Open Telekom Cloud, and Orange Flexible Engine. It also supports Kubernetes Ingress Controllers. This unified interface across all deployments reduces the need for specialised platform expertise. For example, Education First (EF) deployed two VLM-2000 and six VLM-200 virtual appliances during their AWS migration. This move reduced their infrastructure management team from 30 to 4 while significantly lowering latency for thousands of student connections.

Traffic routing and health monitoring capabilities

Kemp LoadMaster combines advanced traffic routing with proactive health monitoring to ensure application reliability. It offers Layer 4–7 application-aware load balancing, allowing content-based routing using elements like URLs, headers, and cookies. Intelligent health checks redirect traffic away from underperforming instances to maintain high availability. Hardware appliances support up to 90 Gbps, while cloud deployments are not bound by a fixed hardware throughput ceiling. ASOS’s Chief Information Officer, Clifford Cohen, praised the platform's reliability:

We had our biggest day ever with 167 million visits and the platform handled it brilliantly.
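The content-based routing and health-aware failover described above can be sketched in a few lines. This is a minimal illustration, not LoadMaster's rule engine: the pool names, the `/api/` prefix, the `X-Client` header and the `beta` cookie are all hypothetical.

```python
import random
from dataclasses import dataclass, field


@dataclass
class Backend:
    address: str
    healthy: bool = True  # updated by a separate health-check process


@dataclass
class Request:
    path: str
    headers: dict[str, str] = field(default_factory=dict)  # assumes normalised names
    cookies: dict[str, str] = field(default_factory=dict)


# Hypothetical pools keyed by name.
POOLS = {
    "api": [Backend("10.0.1.10:8080"), Backend("10.0.1.11:8080")],
    "mobile": [Backend("10.0.2.10:8080")],
    "beta": [Backend("10.0.3.10:8080")],
    "web": [Backend("10.0.4.10:80"), Backend("10.0.4.11:80")],
}


def choose_pool(req: Request) -> str:
    """Layer 7 rules: check the cookie, then a header, then the URL path."""
    if req.cookies.get("beta") == "1":
        return "beta"
    if req.headers.get("X-Client") == "mobile":
        return "mobile"
    if req.path.startswith("/api/"):
        return "api"
    return "web"


def route(req: Request) -> Backend:
    """Pick a random healthy backend from the matched pool, falling back to 'web'."""
    for name in (choose_pool(req), "web"):
        healthy = [b for b in POOLS[name] if b.healthy]
        if healthy:
            return random.choice(healthy)
    raise RuntimeError("no healthy backends available")
```

For example, if the only `mobile` backend fails its health check, requests carrying `X-Client: mobile` are served by the `web` pool instead of failing.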

10. Loadbalancer.org

Loadbalancer.org offers a cloud-agnostic Application Delivery Controller, ensuring consistent performance across on-premises setups, AWS, and Azure environments. This uniformity simplifies transferring policies between infrastructures. The platform holds a 4.93/5 rating on Gartner Peer Insights, with users frequently praising its ease of use and reliability. Ajay Patel, Senior Systems Engineer at GE HealthCare, shared:

"Loadbalancer.org's appliances are much simpler to use and quicker to deploy than other solutions in the field."

These qualities make it a strong choice for businesses pursuing a hybrid cloud strategy.

Scalability across hybrid and multi-cloud environments

Loadbalancer.org prioritises scalability, using Global Server Load Balancing (GSLB) to distribute traffic across multiple data centres and cloud providers based on real-time health metrics and user location. For high-bandwidth applications such as AI workloads, locality-based GSLB minimises WAN costs and latency by keeping traffic within the local data centre. The platform supports throughput exceeding 1 Tbps, meeting the demands of complex infrastructures. Notably, GSLB functionality is included at no extra cost, and the Freedom Licence model allows businesses to expand across environments without incurring per-instance fees.

Integration with cloud platforms (AWS, Azure, GCP, etc.)

Loadbalancer.org can be deployed on all three major cloud platforms, although the high-availability model differs between them. On AWS, it operates as a high-availability (HA) pair on EC2, working alongside an AWS Network Load Balancer or Application Load Balancer. For Azure, it deploys as a virtual appliance pair within a virtual network, with the Azure Load Balancer handling failover and supporting "HA ports" for multi-port applications. On GCP, however, the solution runs as a single virtual instance on Compute Engine, without the native HA clustering available in the other environments. Apart from this difference, the platform provides advanced traffic management across all three services.

Traffic routing and health monitoring capabilities

The platform supports Layer 4 and Layer 7 virtual services, including features like content switching, URL rewrites, SSL termination, and WebSocket support. Its advanced persistence options, such as cookie-based and custom sticky sessions, go beyond the capabilities of native cloud load balancers. For backend server monitoring, its application-aware health checks provide more detailed insights than standard cloud-native probes. Marco Kemmeren, Network Engineer at Philips Healthcare, highlighted the platform's strengths:

"The bottom line is this: performance, efficiency and customer service matter - and the Loadbalancer team get that."

Tool Comparison Table

Choosing the right load balancer boils down to your infrastructure, budget, and specific technical requirements. Below is a comparison of ten popular tools, highlighting their best use cases, supported platforms, deployment options, standout features, and user ratings.

| Tool | Best Use Case | Platforms | Deployment Options | Main Features | User Feedback |
|---|---|---|---|---|---|
| F5 BIG-IP | Enterprise Kubernetes deployments needing advanced security | Kubernetes, enterprise data centres | Hardware, virtual appliance | Advanced SSL termination, commercial support | Highly rated for enterprise security |
| NGINX Plus | Microservices & Kubernetes environments | Multi-cloud, on-premises, Kubernetes | Software, virtual appliance | Real-time analytics, HTTP/3 support, API gateway, URL rewriting | Popular for flexibility (pricing approx. £3,400/year) |
| HAProxy Enterprise | High-performance, low-latency workloads with AI integration | Linux, multi-cloud, Kubernetes | Software (with open-source option) | L4 and L7 load balancing, Universal Mesh, AI gateway, DDoS/WAF | Praised as "rock solid" |
| AWS ELB | EC2-heavy, cloud-native applications | AWS | Managed service | Auto-scaling, mTLS, Lambda integration | Top-rated in G2 Winter 2025 |
| Google Cloud LB | Globally distributed services, high-traffic apps | Google Cloud, hybrid, multi-cloud | Managed service (anycast) | Global anycast IP, HTTP/3 over QUIC, AI model routing | Top-rated in G2 Winter 2025 |
| Azure App Gateway | Microsoft ecosystem, Layer 7 routing | Azure | Managed service (regional) | WAF integration, SSL termination, cookie affinity | Top-rated in G2 Winter 2025 |
| Citrix ADC | Hybrid/multi-cloud global load balancing | AWS, Azure, GCP, on-premises | Hardware, virtual, cloud | Proximity-based routing via Metric Exchange Protocol (MEP), StyleBooks | Strong enterprise adoption |
| Traefik | Containerised environments | Kubernetes, Docker, multi-cloud | Software, cloud-native | Dynamic configuration, health checks | Popular for container orchestration |
| Kemp LoadMaster | Adaptive balancing & security | AWS, Azure, on-premises | Hardware, virtual, cloud | WAF, IPS, GSLB, centralised management | Praised for excellent support, as noted by Justin S. |
| Loadbalancer.org | Multi-cloud consistency | AWS, Azure, GCP, on-premises | Virtual appliance | Vendor-agnostic, full ADC feature set, GSLB | Rated 4.93/5 on Gartner Peer Insights |

The table offers a quick overview, but there are some additional points worth noting.

Cloud-native tools like AWS ELB, Google Cloud LB, and Azure Application Gateway work seamlessly within their respective ecosystems. However, this tight integration can lead to vendor lock-in. On the other hand, cloud-agnostic solutions such as Loadbalancer.org, Kemp LoadMaster, and HAProxy are better suited for hybrid environments, offering consistent functionality across multiple platforms.

The global load balancer market is expected to grow from £5.6 billion in 2025 to £15.4 billion by 2033, with a compound annual growth rate of 13.40%. This reflects the rising demand for solutions that are both scalable and flexible.
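The quoted growth rate can be sanity-checked from the two endpoints with the standard compound annual growth rate formula, CAGR = (end / start)^(1 / years) − 1, over the eight years from 2025 to 2033.

```python
def cagr(start: float, end: float, years: int) -> float:
    """Compound annual growth rate: (end / start) ** (1 / years) - 1."""
    return (end / start) ** (1 / years) - 1


# Market size in £ billion, 2025 -> 2033 (figures from the paragraph above).
rate = cagr(5.6, 15.4, 2033 - 2025)
print(f"{rate:.2%}")  # prints 13.48%
```

The result, roughly 13.5%, is close to the quoted 13.40%; the small gap is most likely due to rounding in the endpoint figures.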

When it comes to cost and scalability, Kemp LoadMaster stands out at approximately £116/month for 10TB throughput, compared to Azure's equivalent at £257/month. NGINX Plus, while more expensive at around £3,400/year, offers robust features. HAProxy provides flexibility with its free open-source version alongside a paid Enterprise edition, catering to organisations of varying sizes.

For performance-critical scenarios, the choice of load balancer depends on the layer of operation. Layer 4 (TCP/UDP) workloads needing ultra-low latency are best handled by tools like AWS Network Load Balancer or Azure Load Balancer (Standard SKU). Meanwhile, Layer 7 (HTTP/S) routing, which involves content-based decisions, is where tools like NGINX Plus, HAProxy Enterprise, and Azure Application Gateway shine with features like advanced URL rewriting and cookie-based routing. Google Cloud Load Balancing also stands out because its global load balancers expose a single anycast IP, whereas AWS and Azure rely on additional services such as AWS Global Accelerator or Azure Front Door for similar global reach.

Conclusion

Choosing the right hybrid cloud load balancer isn't a matter of finding a universal solution; it’s about matching the tool to your organisation’s specific architecture and operational goals. For those deeply invested in a single ecosystem, cloud-native options like AWS Elastic Load Balancing, Azure Application Gateway, and Google Cloud Load Balancer offer tight integration with services like AWS Lambda or Azure Active Directory (now Microsoft Entra ID). On the other hand, cloud-agnostic solutions such as NGINX Plus, HAProxy Enterprise, and Loadbalancer.org provide a consistent experience across multiple clouds and on-premises environments, helping to sidestep vendor lock-in.

The pace of advancement in load balancing technology also cannot be ignored. The hybrid cloud landscape is evolving quickly, with significant growth projected in the years ahead. By 2026, more than 33% of enterprise software applications are expected to leverage some form of autonomous AI. As noted by CodeBrewTools:

"Routing in 2026 is no longer about moving packets; it is about transmitting essential context".

This shift highlights the growing importance of solutions capable of adapting to AI-driven and context-aware workloads. Traditional approaches to routing are being redefined to meet the demands of these emerging use cases.

Whether your focus is on enterprise-grade security with F5 BIG-IP, cost efficiency with Kemp LoadMaster, or global scalability with Google Cloud Load Balancer, the ultimate goal should be choosing a solution that balances your current requirements with future growth. From managed services to advanced Layer 7 controls, the tools discussed here offer flexibility to suit diverse hybrid cloud architectures.

FAQs

How do I choose between a cloud-native and a cloud-agnostic load balancer?

It comes down to your system's architecture and how you plan to scale. Cloud-native load balancers are designed to work seamlessly with a specific cloud provider. They deliver high performance and access to built-in features, making them a great choice for single-cloud environments. Cloud-agnostic load balancers, by contrast, can operate across multiple cloud providers or hybrid setups, offering flexibility and helping you avoid being tied to one vendor. If you want simplicity and plan to stay on one platform, cloud-native is the way to go. For multi-cloud strategies or possible future migrations, cloud-agnostic is the smarter option.

Do I need Layer 7 load balancing, or is Layer 4 enough?

The choice between Layer 4 (transport layer) and Layer 7 (application layer) load balancing depends on what your workloads need. Layer 4 has lower overhead and works well for straightforward traffic distribution, making it a good fit for high-performance requirements. Layer 7 adds more advanced capabilities, such as SSL termination and routing based on request content like URLs, headers, or cookies, which suits complex applications or stricter security requirements.

In hybrid cloud environments, a mix of both might be the best approach: Layer 7 for handling intricate applications or securing sensitive data, and Layer 4 for simpler, speed-focused tasks.
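The practical difference is what the balancer can see. A Layer 4 balancer decides from connection details such as addresses, ports and protocol, whereas a Layer 7 balancer parses the HTTP request itself. The sketch below contrasts the two; the backend names, path prefixes and SHA-256 flow hashing are illustrative assumptions, not any particular product's algorithm.

```python
import hashlib

BACKENDS = ["app-a", "app-b", "app-c"]  # hypothetical backend names


def pick_layer4(src_ip: str, src_port: int, dst_ip: str, dst_port: int,
                proto: str = "tcp") -> str:
    """Layer 4: hash the connection tuple so every packet of a flow hits the same backend.

    Only addresses, ports and protocol are visible here; the HTTP path is not.
    """
    key = f"{proto}|{src_ip}|{src_port}|{dst_ip}|{dst_port}".encode()
    digest = int.from_bytes(hashlib.sha256(key).digest()[:8], "big")
    return BACKENDS[digest % len(BACKENDS)]


def pick_layer7(raw_request: bytes) -> str:
    """Layer 7: parse the HTTP request line and route on the URL path."""
    request_line = raw_request.split(b"\r\n", 1)[0].decode("ascii")
    _method, path, _version = request_line.split(" ", 2)
    if path.startswith("/static/"):
        return "app-c"  # e.g. a cache-friendly pool
    if path.startswith("/api/"):
        return "app-b"
    return "app-a"
```

The Layer 4 function cannot tell an API call from an image request, which is why content-based rules require Layer 7 (and, for HTTPS, terminating TLS so the request can be read).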

How can I avoid vendor lock-in in a hybrid cloud setup?

To steer clear of vendor lock-in in a hybrid cloud setup, consider using open-source tools that can operate across various cloud providers and on-premises systems. For example, tools like OpenTofu and Pulumi allow you to manage infrastructure as code, giving you the flexibility to work with different platforms while minimising dependence on a single vendor.

Additionally, adopting vendor-neutral load balancing solutions can streamline traffic management across hybrid and multicloud environments. These solutions help maintain scalability and performance without being restricted to proprietary platforms.

Let's grow your business together

At Antler Digital, we believe that collaboration and communication are the keys to a successful partnership. Our small, dedicated team is passionate about designing and building web applications that exceed our clients' expectations. We take pride in our ability to create modern, scalable solutions that help businesses of all sizes achieve their digital goals.

If you're looking for a partner who will work closely with you to develop a customised web application that meets your unique needs, look no further. From handling the project directly, to fitting in with an existing team, we're here to help.