AI Accountability: What Leaders Need to Know

2026-04-09

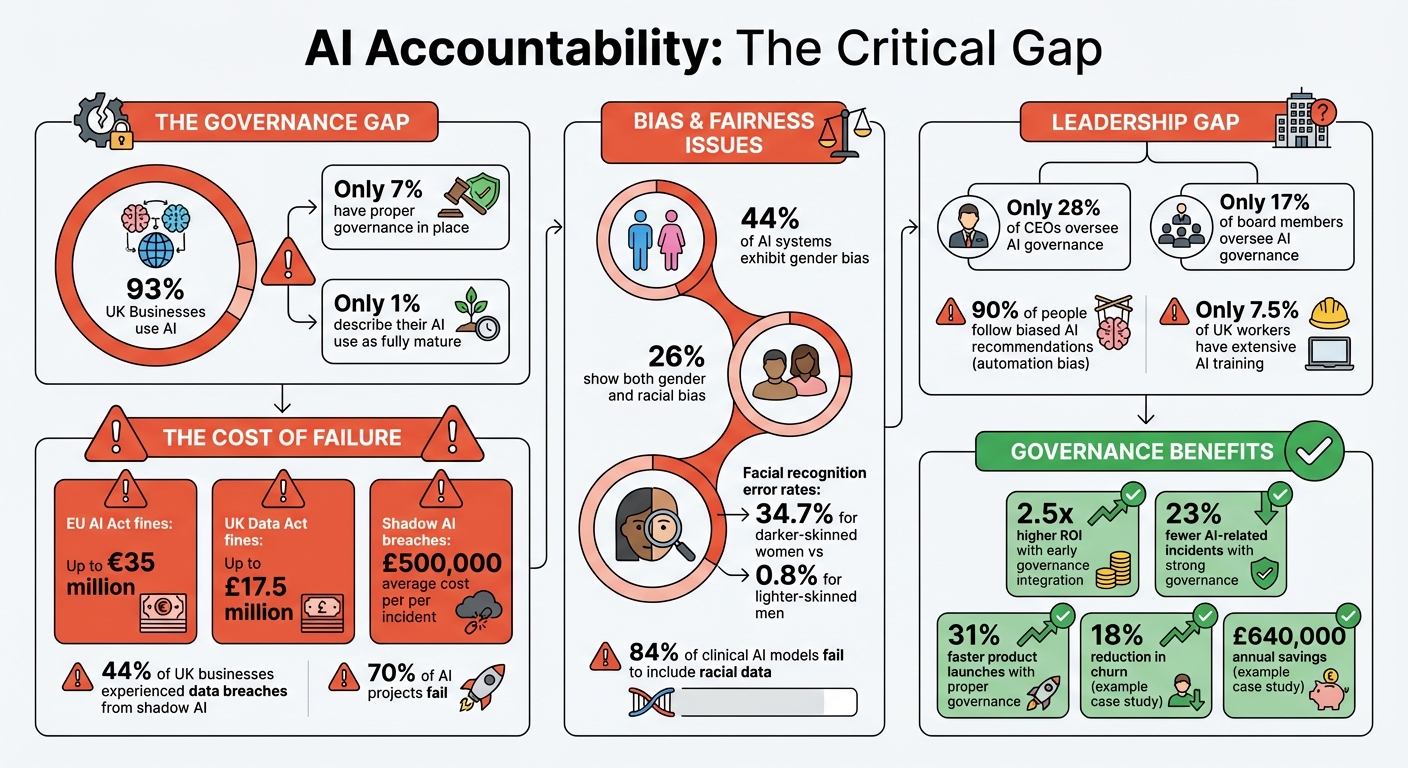

AI is everywhere - 93% of UK businesses use it. Yet, only 7% have proper governance in place. This gap is risky, especially with stricter regulations like the EU AI Act (effective August 2026) and the UK’s Data (Use and Access) Act 2025. These laws impose fines up to €35m or £17.5m for non-compliance. Beyond fines, unchecked AI can lead to biased decisions, data breaches, and reputational damage.

Key Takeaways:

- Why It Matters: AI accountability ensures ethical, legal, and transparent decision-making.

- Leadership Role: Senior managers must oversee AI governance, not just technical teams.

- Core Principles: Reduce bias, improve transparency, and maintain human oversight.

- Action Steps: Define AI use cases, assign responsibility, and regularly test, document, and train teams.

With proper governance, AI becomes a tool for growth, not a liability. Leaders must act now to ensure compliance, reduce risks, and build trust.

AI Accountability Statistics: Governance Gap and Business Impact

Core Principles of AI Accountability

Building accountability into AI systems requires integrating fairness, openness, and human oversight from start to finish. These principles are what determine whether AI becomes a valuable tool or a regulatory headache.

Reducing Bias in AI Systems

Bias in AI isn't just an ethical issue - it’s a serious business risk. A study of 133 AI systems across various industries revealed that 44% exhibited gender bias, while 26% showed both gender and racial bias. In one example, facial recognition systems had error rates of 34.7% for darker-skinned women, compared to just 0.8% for lighter-skinned men. Such disparities can lead to discriminatory outcomes, like unfair hiring practices or biased loan approvals, which can undermine public trust and cause significant reputational harm.
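One way to make disparities like these visible is to compare error rates across groups on a labelled audit sample. The Python sketch below is a minimal illustration, not a full fairness audit: the function names, group labels, and sample data are all hypothetical.

```python
from collections import defaultdict


def error_rates_by_group(records):
    """Return {group: error_rate} from (group, predicted, actual) tuples."""
    totals = defaultdict(int)
    errors = defaultdict(int)
    for group, predicted, actual in records:
        totals[group] += 1
        if predicted != actual:
            errors[group] += 1
    return {group: errors[group] / totals[group] for group in totals}


def largest_gap(rates):
    """Difference between the worst and best group error rates."""
    return max(rates.values()) - min(rates.values())


# Hypothetical audit sample: (group, predicted label, true label)
sample = [
    ("group_a", 1, 1), ("group_a", 0, 0), ("group_a", 1, 1), ("group_a", 0, 0),
    ("group_b", 1, 0), ("group_b", 0, 0), ("group_b", 1, 1), ("group_b", 0, 1),
]

rates = error_rates_by_group(sample)
print(rates)
print("largest gap:", largest_gap(rates))
```

A large gap does not explain why the model performs worse for one group, but it does tell you where to look first.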

Addressing bias requires action throughout the AI lifecycle - from defining the problem to retiring outdated models. A Data Protection Impact Assessment (DPIA) is a good starting point. This process helps identify "allocative harms" (unequal resource distribution) and "representational harms" (reinforcing stereotypes) before they become embedded in your system.

Bias mitigation typically works at three points in the pipeline: improving training data, refining learning algorithms, and recalibrating outputs. Each approach comes with trade-offs, so the best choice depends on your specific needs and legal obligations.

One concerning statistic: 84% of clinical AI models fail to include racial data, making it difficult to evaluate fairness. To address this, establish a model registry to document model performance, ensure reproducibility, and enable audits.

Bias isn’t a one-time issue. Over time, demographic changes can cause "concept drift", introducing new biases. Regular reviews and participatory design - engaging individuals with relevant lived experiences or their representatives - can help uncover societal biases that technical teams might miss.
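A regular review can include a simple statistical check on whether the population a model sees today still resembles the one it was built on. The sketch below uses the Population Stability Index (PSI), a swapped-in technique that the article does not prescribe. PSI measures a shift in an input or score distribution, which is an early warning sign rather than a direct measure of concept drift. The bin count, the sample data, and the 0.25 alert level (a commonly cited rule of thumb) are assumptions.

```python
import math


def population_stability_index(expected, actual, bins=10):
    """Compare two samples of one numeric feature; higher means more shift."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # avoid zero width for constant data

    def shares(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)  # clamp out-of-range values
            counts[idx] += 1
        # A small floor avoids log(0) for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Hypothetical data: applicant ages at training time vs. today.
baseline = [float(v) for v in range(100)]
shifted = [v + 50 for v in baseline]

ALERT_LEVEL = 0.25  # assumed rule-of-thumb threshold
psi = population_stability_index(baseline, shifted)
print("review needed" if psi > ALERT_LEVEL else "stable")
```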

By tackling bias proactively, organisations can create AI systems that make clearer, more impartial decisions.

Making AI Decisions Transparent

Transparency is key to building trust in AI, but it doesn’t mean exposing every detail of your code. As Kevin Werbach from Wharton’s Accountable AI Lab explains:

"If a decision can't be explained, it can't be trusted".

The goal is to clearly communicate how decisions are made without compromising sensitive information.

A tiered explanation approach works well. Provide technical teams with detailed documentation for audits, while offering the public a simplified version. This satisfies regulatory demands while safeguarding proprietary data.

Focus on two types of explanations:

- Process-based: Explain governance and design choices to show the system is responsibly managed.

- Outcome-based: Clarify specific decisions in plain language. For example, if a loan is denied, outline the factors involved - like credit history or income - without revealing algorithmic details.
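An outcome-based explanation can be sketched as a mapping from the factors that most lowered a score to plain-language reasons. The example below assumes a model whose score can be broken down into per-factor contributions. The factor names, values, and wording are hypothetical, and no algorithmic detail is exposed to the recipient.

```python
def top_reasons(contributions, labels, limit=2):
    """Return plain-language reasons for the factors that lowered the score most."""
    negative = [(name, value) for name, value in contributions.items() if value < 0]
    negative.sort(key=lambda item: item[1])  # most negative first
    return [labels[name] for name, _ in negative[:limit]]


# Hypothetical per-factor contributions for one declined loan application.
contributions = {
    "credit_history": -0.42,
    "income": -0.15,
    "account_age": 0.08,
    "existing_debt": -0.05,
}

# Wording shown to the applicant, kept separate from model internals.
labels = {
    "credit_history": "Missed payments in your recent credit history",
    "income": "Income below the level needed for this loan amount",
    "account_age": "Length of time you have held your account",
    "existing_debt": "Level of existing debt",
}

reasons = top_reasons(contributions, labels)
print(reasons)
```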

Tools like model cards or "datasheets" can help. These documents summarise how models perform under different conditions and describe their training data context. They’re a practical way to inform stakeholders about a model’s limitations and appropriate uses.
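As a rough illustration, a model card can be kept as a small structured record, with a reduced view for public audiences. The field names and values below are assumptions chosen for this sketch, not a standard schema.

```python
from dataclasses import asdict, dataclass, field
from typing import Dict, List


@dataclass
class ModelCard:
    name: str
    version: str
    owner: str
    intended_use: str
    out_of_scope_uses: List[str]
    training_data_context: str
    metrics_by_condition: Dict[str, float] = field(default_factory=dict)
    known_limitations: List[str] = field(default_factory=list)

    def public_summary(self) -> dict:
        """Simplified view for non-technical readers; omits internal metrics."""
        return {
            "name": self.name,
            "intended_use": self.intended_use,
            "out_of_scope_uses": self.out_of_scope_uses,
            "known_limitations": self.known_limitations,
        }


# Hypothetical example entry.
card = ModelCard(
    name="loan-screening",
    version="1.2.0",
    owner="credit-risk-team",
    intended_use="First-pass screening of personal loan applications",
    out_of_scope_uses=["Final lending decisions without human review"],
    training_data_context="UK applications, 2019 to 2023",
    metrics_by_condition={"accuracy_overall": 0.91, "accuracy_thin_file": 0.78},
    known_limitations=["Lower accuracy for applicants with short credit files"],
)

full_record = asdict(card)        # detailed version for auditors
summary = card.public_summary()   # simplified version for the public
```

Keeping both views in one record means the public summary cannot drift out of step with the internal documentation.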

JP Morgan offers a real-world example of transparency in action. In April 2025, the company’s Chief Information Security Officer issued a public letter to third-party suppliers about responsible AI practices. Their governance framework includes a dedicated team of over 20 staff members for model risk, with the head of AI policy reporting directly to the CEO. This setup highlights how transparency isn’t just about documentation - it’s also about having clear organisational structures and accountability.

Transparency lays the groundwork for essential human oversight, especially in high-stakes scenarios.

Maintaining Human Oversight

Even the most advanced AI systems need human oversight, especially when decisions involve ethical or contextual nuances. Unlike humans, AI struggles to interpret subtlety or adapt to unexpected situations. Zillow’s 2021 pricing algorithm failure, which involved a 6.9% median error rate and a $304 million loss, is a stark reminder of what can happen when AI operates unchecked.

AI mistakes can escalate quickly. While human errors are often isolated, flawed AI models can cause widespread, systemic failures that affect millions of people in real time. Despite this risk, only 28% of CEOs and 17% of board members currently oversee AI governance.

To reduce these risks, organisations should adopt a human-in-the-loop (HITL) system. This ensures that a responsible person reviews and validates AI decisions, particularly in sensitive areas like healthcare, hiring, or finance. Mastercard, for instance, created an AI Governance Council to oversee AI initiatives. They’ve also partnered with the Quebec Artificial Intelligence Institute (Mila) to integrate research on bias testing into their operations.
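In practice, a human-in-the-loop rule can be as simple as a routing function that decides which AI outputs may proceed automatically and which must wait for a reviewer. The sketch below is one possible arrangement under stated assumptions: the `Decision` fields, the list of sensitive domains, and the confidence floor are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Decision:
    case_id: str
    domain: str
    outcome: str
    confidence: float


SENSITIVE_DOMAINS = {"healthcare", "hiring", "finance"}
CONFIDENCE_FLOOR = 0.90  # hypothetical threshold, set by the system owner


def route(decision: Decision) -> str:
    """Send sensitive or low-confidence decisions to a human reviewer."""
    if decision.domain in SENSITIVE_DOMAINS:
        return "human_review"
    if decision.confidence < CONFIDENCE_FLOOR:
        return "human_review"
    return "automated"


print(route(Decision("c1", "hiring", "reject", 0.99)))       # sensitive domain
print(route(Decision("c2", "support", "send_reply", 0.95)))  # routine, confident
```

Note that sensitive domains go to review regardless of confidence, since a confident model can still be confidently wrong.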

However, human oversight alone isn’t enough. Experiments show that people follow biased AI recommendations 90% of the time, a phenomenon known as "automation bias". To counter this, users need training to critically evaluate AI outputs and understand their limitations.

It’s also important to establish clear escalation procedures. Employees should feel safe reporting AI-related concerns without fear of retaliation. Assigning specific individuals or teams to oversee each system’s behaviour ensures accountability. These teams should have the authority to pause deployments if issues arise.

As Professor Saharsh Agarwal from ISB aptly notes:

"The next era of leadership will be defined not by how well executives deploy AI, but by how well they discipline it".

How Leaders Can Build AI Accountability

Deploying AI successfully is no small feat, especially when 42% of projects fail due to confusion around implementation and governance. Organisations that integrate governance early on see a return on investment 2.5 times higher than those that don’t. To make AI accountability part of your strategy from the outset, here’s what you need to focus on.

Define Use Cases and Set Limits

Before rolling out any AI system, you need to clearly define its purpose and boundaries. This means creating an inventory that outlines every AI model in use, detailing its purpose, data sources, and the person responsible for it. Although this might seem straightforward, only 1% of UK businesses describe their AI use as fully mature.

Each use case should then be categorised by risk: minimal, limited, high, or prohibited. For instance, a chatbot answering routine customer queries may fall under minimal risk, while an AI system used for hiring or loan approvals would be considered high risk. It’s also vital to document these use cases thoroughly, specifying what the AI can and cannot do. For example, you might explicitly ban the use of facial recognition in sensitive settings. Sometimes, a simpler, non-AI solution might even be a better fit, reducing risks altogether. As Kevin Werbach from the Wharton Accountable AI Lab emphasises:

"Accountability must be designed in from the start - not bolted on after deployment".

If you’re operating in Europe, keep in mind that the EU AI Act will be fully enforced by August 2026. To prepare, consider a phased approach: spend the first couple of weeks creating your inventory, dedicate the next month to defining your framework, and then use the following months to integrate controls into your workflows.
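The inventory and risk categories described above can start life as a very small data structure. The following sketch is illustrative only: the class and field names are hypothetical, the four tiers mirror the categories named in this article, and the DPIA check reflects one reasonable policy rather than a legal test.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List


class RiskTier(Enum):
    MINIMAL = "minimal"
    LIMITED = "limited"
    HIGH = "high"
    PROHIBITED = "prohibited"


@dataclass
class AISystem:
    name: str
    purpose: str
    data_sources: List[str]
    owner: str
    risk_tier: RiskTier
    dpia_completed: bool = False


def deployment_blockers(system: AISystem) -> List[str]:
    """List the reasons a system should not go live yet (empty means clear)."""
    problems = []
    if system.risk_tier is RiskTier.PROHIBITED:
        problems.append("use case is prohibited")
    if not system.owner:
        problems.append("no named owner")
    if system.risk_tier is RiskTier.HIGH and not system.dpia_completed:
        problems.append("high-risk system without a completed DPIA")
    return problems


# Hypothetical inventory entries.
inventory = [
    AISystem("support-chatbot", "Answer routine customer queries",
             ["help-centre articles"], "support-lead", RiskTier.MINIMAL),
    AISystem("cv-screener", "Shortlist job applicants",
             ["applicant CVs"], "", RiskTier.HIGH),
]

report = {system.name: deployment_blockers(system) for system in inventory}
print(report)
```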

Once you’ve established boundaries, assigning clear ownership is the next critical step.

Assign Responsibility and Ensure Clarity

AI systems don’t operate in a vacuum - people are ultimately responsible for their behaviour and impact. Each AI system should have a designated owner, whether that’s an individual or a team. The structure will depend on your organisation’s size. For instance:

- In micro-businesses (1–9 employees), the founder or operations lead should oversee AI policies and the inventory.

- Small businesses (10–49 employees) may appoint an AI Lead to handle training and approvals.

- Medium-sized organisations (50–249 employees) should consider an AI Officer who reports to the board quarterly.

Companies like Salesforce and JP Morgan have centralised AI oversight by establishing dedicated teams that report to senior management.

However, assigning titles isn’t enough. Decision-making authority must also be clearly defined. Who approves new AI initiatives? Who can escalate issues? Who has the power to deactivate a system if needed? These questions matter because the stakes are high - 44% of UK businesses have experienced data breaches due to "shadow AI", with each breach costing an average of £500,000. A survey conducted in February 2026 by Red Eagle Tech found that 70% of employees without clear AI guidelines avoid using the technology altogether, inadvertently leaving more risk-tolerant colleagues with a productivity advantage.

To mitigate these risks, establish a streamlined approval process, aiming for a one-week turnaround for standard tools. As Cory Smith, a Fortune 100 Innovation Leader, puts it:

"AI doesn't remove responsibility from leaders. It concentrates it".

Once roles and rules are in place, the focus should shift to continuous testing, documentation, and training.

Test, Document, and Train Your Team

Ongoing testing, thorough documentation, and consistent training are the backbone of effective AI governance. Testing should be a continuous process, using tools like Fairlearn or AI Fairness 360 to audit for bias. Performance metrics - such as accuracy, latency, and drift - should be monitored via production dashboards. Red-teaming exercises can also help identify vulnerabilities, such as chatbots inadvertently exposing sensitive data.
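Behind a production dashboard there is usually a set of agreed limits for each metric. As a hedged sketch, the check below compares current readings against thresholds and reports any breach. The metric names and limits are hypothetical and would need to be set per system.

```python
# Hypothetical limits: ("min", x) means the value must not fall below x,
# ("max", x) means it must not rise above x.
THRESHOLDS = {
    "accuracy": ("min", 0.90),
    "p95_latency_ms": ("max", 500),
    "drift_psi": ("max", 0.25),
}


def breaches(metrics, thresholds=THRESHOLDS):
    """Return (metric, value, limit) for every missing or out-of-range metric."""
    alerts = []
    for name, (kind, limit) in thresholds.items():
        value = metrics.get(name)
        if value is None:
            alerts.append((name, None, limit))  # a missing metric is itself an alert
        elif kind == "min" and value < limit:
            alerts.append((name, value, limit))
        elif kind == "max" and value > limit:
            alerts.append((name, value, limit))
    return alerts


# Hypothetical readings from one monitoring run.
current = {"accuracy": 0.87, "p95_latency_ms": 420, "drift_psi": 0.31}
alerts = breaches(current)
for name, value, limit in alerts:
    print(f"ALERT {name}: value={value}, limit={limit}")
```

Treating a missing metric as an alert is a deliberate choice here: a dashboard that silently stops reporting is a governance gap in its own right.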

Documentation is equally important. Maintain an up-to-date inventory of your AI systems, along with model cards and data factsheets. For high-risk systems, conduct Data Protection Impact Assessments to address potential risks to individual rights. A cautionary tale comes from the 2025 UK tribunal case, Oxford Hotel Investments Ltd v Great Yarmouth Borough Council, where fabricated legal citations generated by an AI system caused significant problems.

Training is another area where many organisations fall short. With only 7.5% of UK workers having extensive AI training, even brief 30-minute sessions can make a big difference. Start with the basics, like acceptable use policies, data handling protocols, and incident reporting procedures. Executives should understand risks like drift, hallucinations, and intellectual property concerns, while data teams need to be skilled in governance, bias detection, and privacy techniques. Organisations with stronger AI governance report 23% fewer AI-related incidents and bring products to market 31% faster.

Regular reviews are also essential. Conduct monthly checks for new tools and near-misses, update your inventory and risk register quarterly, and perform annual strategic reviews to ensure your framework aligns with business goals. As Cory Smith warns:

"You cannot scale what you cannot explain, govern, monitor, and defend".

Working with Experts for Scalable, Accountable AI Solutions

Navigating the complexities of internal AI governance can be daunting. In fact, 70% of AI projects fail when organisations focus too heavily on technology while neglecting the actual business problems they aim to solve. Many companies struggle to align their strategic goals with the technical execution required to meet them. This is where expert partnerships come into play, offering practical, hands-on solutions. By embedding within your team, specialised partners can transform intricate requirements into operational systems that adhere to governance standards right from the start. This approach complements leadership-driven accountability by addressing the technical challenges of integration.

Benefits of Expert Partnerships

The right partner doesn’t just provide strategy - they integrate with your team to manage everything from initial diagnosis to full deployment. This ensures that the focus stays on solving real business challenges, avoiding the trap of investing in flashy tools that lack practical value.

Take, for example, Antler Digital’s work with a SaaS client. They developed a customer retention early-warning system that combined product analytics and support transcripts. This system identified at-risk customers before renewal and pushed personalised “save-offers” into an internal success console linked with HubSpot. The result? An 18% reduction in churn. In another case, Antler Digital helped a business detect cost leakage by mapping operational touchpoints. They created a system that automatically adjusted supplier allocations when live data flagged shipping delays, saving the company £640,000 annually.

Expert partners also ensure compliance with regulatory standards. From GDPR adherence to end-to-end encryption and detailed audit logging, these specialists make sure every AI action is fully traceable and explainable. With the EU AI Act set to be fully enforced by August 2026, compliance is not just important - it’s mandatory for UK businesses whose AI systems interact with the EU.

These examples highlight how expert partnerships provide both technical solutions and governance support to help businesses succeed.

What Antler Digital Offers

Antler Digital focuses on creating autonomous workflows and AI integrations for small and medium-sized enterprises (SMEs) across industries like FinTech, SaaS, and environmental platforms. Their approach follows a phased roadmap:

- Diagnosis (4–6 weeks): Identifying the problem and assessing feasibility.

- Proof-of-concept (4–8 weeks): Testing solutions at a smaller scale.

- Full implementation (3–9 months): Rolling out the validated solution.

This phased process ensures that technical feasibility is confirmed before significant resources are committed.

Their technology stack includes tools like LangChain and Mastra AI for orchestration, Pinecone and Qdrant for vector databases, and large language models such as GPT-5, Claude, and the Llama families. Security is a top priority, with measures like prompt injection protection and secure deployment pipelines built into their processes.

Antler Digital’s expertise is reflected in client success stories. Gabriele Sabato, CEO and Co-Founder of Wiserfunding, shared:

"My working relationship with Sam and Antler team has been ongoing for over three years... We'd recommend the team to others looking for talent to take their product to the next level".

Jeremy Taylor, CTO at Wiserfunding, added:

"The team at Antler Digital was able to take our complex ideas and turn them into a functional and user-friendly SaaS app... We love working with them as an in-house team where they bring the expertise we needed".

For businesses aiming to implement AI while ensuring accountability, partnering with experts who understand both the technical and governance aspects can make all the difference. These collaborations help organisations move from failed pilots to systems that deliver real, measurable results - all while maintaining trust and compliance throughout the AI lifecycle.

Conclusion: Moving Forward with Responsible AI

AI accountability is more than just a safeguard - it’s a leadership skill that transforms AI from a potential risk into a long-term asset. Cory Smith, Innovation Leader, captures this sentiment perfectly:

"Responsible AI isn't a constraint on innovation. It's the condition that allows innovation to last".

The data backs this up. Organisations with strong AI governance report 23% fewer AI-related incidents and roll out new capabilities 31% faster. These numbers highlight the importance of clear, decisive leadership.

To move forward effectively, leaders should prioritise three key actions. First, assign clear human accountability for every AI system’s behaviour and its outcomes. Second, ensure AI decisions are explainable to non-experts - if you can’t explain a decision, it’s impossible to trust it. Finally, actively monitor for bias and performance issues to catch problems before they escalate.

For smaller businesses, this might mean starting with simple steps: drafting a one-page acceptable use policy, maintaining a list of approved tools, and designating a senior manager to oversee AI-related decisions. Larger organisations, on the other hand, should establish cross-functional oversight teams that include experts from legal, risk, ethics, operations, and beyond - not just data scientists.

The regulatory environment is also becoming stricter. The EU AI Act, which will be fully enforced by August 2026, makes compliance mandatory for UK businesses whose AI systems interact with the EU. Failure to comply could lead to fines as high as €35 million or 7% of global annual turnover. But accountability isn’t just about avoiding fines - it’s about building systems that employees trust, customers embrace, and regulators approve.

Partnering with experts who understand both the technical and governance aspects of AI can help close the gap between ambition and execution. For example, Antler Digital’s phased approach - from initial diagnosis to proof-of-concept and full-scale implementation - ensures accountability is embedded right from the start. This approach tackles technical and governance challenges side by side.

Ultimately, accountability is the cornerstone of responsible AI. As Saharsh Agarwal, Assistant Professor at ISB, puts it:

"The next era of leadership will be defined not by how well executives deploy AI, but by how well they discipline it".

FAQs

Do we need to comply with the EU AI Act if we’re a UK business?

UK businesses need to align with the EU AI Act if they offer services within Europe or are involved in EU client supply chains. The Act applies to any AI systems available in the EU market, regardless of where the provider is based. Full compliance will be required by August 2026.

What counts as a “high-risk” AI use case in practice?

A "high-risk" AI use case refers to situations where AI is applied in sensitive areas such as employment decisions, credit assessments, education, law enforcement, critical infrastructure, or determining eligibility for public services. These applications demand strict adherence to regulatory standards, which includes having documented risk management systems, ensuring human oversight, and maintaining thorough technical documentation.

What’s the simplest AI governance setup we can start with this month?

For small and medium-sized enterprises (SMEs) in the UK, setting up AI governance doesn't have to be overwhelming. Here's a straightforward approach that can be implemented in just 30 days:

- Create an approved tools list: Identify and document which AI tools are allowed for use in your business.

- Draft a one-page AI use policy: Outline clear and concise guidelines for how AI should (and shouldn’t) be used within your organisation.

- Appoint a responsible owner: Assign someone to oversee AI governance and ensure compliance with the policy.

- Maintain a basic risk register: Keep a simple log of potential risks associated with AI use and how to address them.

- Conduct staff awareness training: Train your team to understand AI’s benefits, limitations, and responsible usage.

- Set a quarterly review cycle: Regularly assess your AI practices and update policies as needed.

This no-fuss approach keeps things practical and scalable, ensuring your business can use AI responsibly without getting bogged down in overly complex frameworks.
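A basic risk register with a quarterly review cycle does not need specialist software. The sketch below shows one possible shape, assuming a roughly 91-day review interval. The entries, field names, and dates are hypothetical.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class RiskEntry:
    risk: str
    mitigation: str
    owner: str
    last_reviewed: date


REVIEW_INTERVAL = timedelta(days=91)  # roughly one quarter (assumption)


def overdue(entries, today):
    """Return the entries that have not been reviewed within the interval."""
    return [e for e in entries if today - e.last_reviewed > REVIEW_INTERVAL]


# Hypothetical register entries.
register = [
    RiskEntry("Staff paste customer data into public chatbots",
              "Approved tools list and awareness training",
              "operations-lead", date(2026, 3, 1)),
    RiskEntry("AI-drafted content contains fabricated citations",
              "Human check before anything is published or filed",
              "operations-lead", date(2025, 12, 1)),
]

late = overdue(register, today=date(2026, 4, 9))
for entry in late:
    print("Review overdue:", entry.risk)
```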
